Lesson 6. Heteroscedasticity

OLS: Ordinary Least Squares

Technique to determine the “best” curve through a scatter diagram

Regression: $Y_i = \hat{\beta}_0 + \hat{\beta}_1 X_{1i} + \dots + \hat{\beta}_k X_{ki} + \hat{u}_i$

(Implied) Causality from right to left

Separate the random component from the systematic component

How? Ordinary Least Squares: $\hat{\beta} = \arg\min_{\beta} \sum_{i=1}^{N} \hat{u}_i^2$
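A minimal sketch of the least-squares arg min, written in Python with NumPy as an assumption (the course tools are Stata commands). The data, the seed and the helper names `ols` and `ssr` are hypothetical. It checks two properties the arg min implies: the residuals are orthogonal to the regressors, and moving away from $\hat{\beta}$ in any direction raises the sum of squared residuals.

```python
import numpy as np

def ols(y, X):
    """OLS coefficients for y = X @ beta + u (X includes a column of ones)."""
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    return beta

def ssr(y, X, beta):
    """Sum of squared residuals for a candidate coefficient vector."""
    resid = y - X @ beta
    return float(resid @ resid)

# Hypothetical data generated from y = 1 + 2x + u
rng = np.random.default_rng(0)
x = rng.normal(size=100)
y = 1.0 + 2.0 * x + rng.normal(scale=0.5, size=100)
X = np.column_stack([np.ones_like(x), x])

beta_hat = ols(y, X)
best = ssr(y, X, beta_hat)

# First-order condition of the arg min: X'(y - X beta_hat) = 0
foc = X.T @ (y - X @ beta_hat)

# Nudging the estimates in any direction increases the SSR
worse = [ssr(y, X, beta_hat + np.array(d)) > best
         for d in ([0.1, 0.0], [0.0, -0.1], [0.05, 0.05])]
print(beta_hat, all(worse))  # all(worse) is True
```

The strict increase is guaranteed algebraically: for any shift $\delta$, the SSR grows by $\delta' X'X \delta > 0$.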

Why squares?

o Work with positive values

o Easy to compute derivatives

o Weights large deviations more heavily (squaring enlarges $|\hat{u}_i| > 1$ and shrinks $|\hat{u}_i| < 1$)

Results

$\hat{\beta}_j$: estimated slope parameter => effect of $X_j$ on $Y$

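$\sigma^2_{\hat{\beta}_j} = \dfrac{\sigma_u^2}{N\,\sigma_{X_j}^2\,(1 - r_{X_j}^2)}$

$\sigma_{\hat{\beta}_j}$: standard error => uncertainty around the effect estimate

($\sigma^2_{X_j}$: sample variance of $X_j$; $r^2_{X_j}$: $R^2$ of a regression of $X_j$ on the other explanatory variables)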
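A numerical check of the standard-error formula, sketched in Python/NumPy (an assumption, not the course's Stata workflow) on hypothetical simulated data. It assumes $\sigma^2_{X_j}$ is the sample variance divided by $N$ and $r^2_{X_j}$ is the $R^2$ of $X_j$ on the other regressors; under those assumptions $\sigma_u^2 / (N\,\sigma^2_{X_j}(1 - r^2_{X_j}))$ should equal the matching diagonal element of the matrix form $\sigma_u^2 (X'X)^{-1}$.

```python
import numpy as np

rng = np.random.default_rng(1)
n = 200
x1 = rng.normal(size=n)
x2 = 0.6 * x1 + rng.normal(size=n)          # x2 is correlated with x1
y = 0.5 + 1.0 * x1 - 2.0 * x2 + rng.normal(scale=1.5, size=n)
X = np.column_stack([np.ones(n), x1, x2])

beta_hat = np.linalg.lstsq(X, y, rcond=None)[0]
resid = y - X @ beta_hat
k = X.shape[1] - 1                           # number of slope parameters
sigma2_u = resid @ resid / (n - k - 1)       # estimated error variance

# Matrix form: Var(beta_hat) = sigma_u^2 (X'X)^{-1}
se_x1_matrix = np.sqrt(sigma2_u * np.linalg.inv(X.T @ X)[1, 1])

# Formula form for x1: r^2 is the R^2 of x1 regressed on the other regressor(s)
Z = np.column_stack([np.ones(n), x2])
aux_resid = x1 - Z @ np.linalg.lstsq(Z, x1, rcond=None)[0]
r2 = 1 - (aux_resid @ aux_resid) / np.sum((x1 - x1.mean()) ** 2)
var_x1 = x1.var()                            # sample variance with 1/N
se_x1_formula = np.sqrt(sigma2_u / (n * var_x1 * (1 - r2)))
print(se_x1_matrix, se_x1_formula)
```

With only two regressors, `r2` is simply the squared correlation between `x1` and `x2`; the closer it is to 1, the larger the standard error.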

The OLS estimator is BLUE (Best Linear Unbiased Estimator) if Gauss-Markov assumptions 1 to 5 are satisfied (normality, assumption 6, is not needed for BLUE).
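What "unbiased" in BLUE means can be illustrated with a small Monte Carlo sketch (Python/NumPy, hypothetical parameters): draw many samples whose errors satisfy the assumptions, re-estimate each time, and compare the average estimate with the true coefficients. This only illustrates unbiasedness; "best" (smallest variance among linear unbiased estimators) is not checked here.

```python
import numpy as np

rng = np.random.default_rng(2)
true_beta = np.array([1.0, 0.5])
n, reps = 50, 5000
x = rng.uniform(0, 10, size=n)              # regressor held fixed across samples
X = np.column_stack([np.ones(n), x])

estimates = np.empty((reps, 2))
for r in range(reps):
    u = rng.normal(scale=2.0, size=n)       # new errors: mean 0, constant variance, independent
    y = X @ true_beta + u
    estimates[r] = np.linalg.lstsq(X, y, rcond=None)[0]

mean_estimates = estimates.mean(axis=0)     # should be close to true_beta
print(mean_estimates)
```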

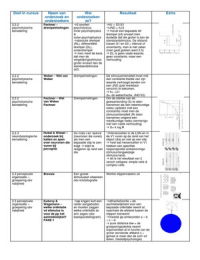

The Classical Assumptions (Stu, Ch. 4), a.k.a. Gauss-Markov Assumptions

Gauss-Markov Assumptions:

1. The regression model is linear in its parameters, is correctly specified, and has an additive error term

2. All explanatory variables are uncorrelated with the error term (no endogeneity)

3. Observations of the error term are uncorrelated with each other over time (no serial correlation)

4. The error term has a constant variance (no heteroskedasticity)

5. No explanatory variable is a perfect linear function of any other explanatory variable(s) (no perfect multicollinearity)

6. The error term is normally distributed with zero mean.
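Assumption 4 can be made concrete with simulated errors. The sketch below (Python/NumPy, hypothetical data) compares the residual spread for low and high values of $x$ when the error variance is constant and when its standard deviation grows with $x$, the fan shape an rvfplot typically reveals.

```python
import numpy as np

rng = np.random.default_rng(3)
n = 1000
x = rng.uniform(1, 10, size=n)
X = np.column_stack([np.ones(n), x])

def residual_spread(u):
    """Fit y = 2 + x + u by OLS; return residual SD below and above the median of x."""
    y = 2.0 + 1.0 * x + u
    beta = np.linalg.lstsq(X, y, rcond=None)[0]
    resid = y - X @ beta
    low = x < np.median(x)
    return resid[low].std(), resid[~low].std()

u_homo = rng.normal(scale=2.0, size=n)      # assumption 4 holds: constant variance
u_hetero = rng.normal(scale=0.5 * x)        # assumption 4 violated: SD grows with x

lo_h, hi_h = residual_spread(u_homo)
lo_x, hi_x = residual_spread(u_hetero)
ratio_homo = hi_h / lo_h        # close to 1
ratio_hetero = hi_x / lo_x      # clearly above 1
print(ratio_homo, ratio_hetero)
```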

Assumptions 1 and 2

Correct model specification = complete specification + correct functional form

Complete specification

Underfitting: omitted variable bias

=> Biased parameters: wrong effects (+ endogeneity)

=> Biased standard errors: unreliable inference
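Underfitting can be sketched with two regressions on the same hypothetical data (Python/NumPy assumed). Dropping the relevant, correlated $x_2$ shifts the slope of $x_1$ by exactly $\hat{\beta}_2 \hat{\delta}_1$, where $\hat{\delta}_1$ is the slope from regressing $x_2$ on $x_1$; this in-sample identity is the omitted variable bias.

```python
import numpy as np

def ols(y, X):
    """OLS coefficients (X includes a column of ones)."""
    return np.linalg.lstsq(X, y, rcond=None)[0]

rng = np.random.default_rng(4)
n = 500
x1 = rng.normal(size=n)
x2 = 0.8 * x1 + rng.normal(scale=0.6, size=n)   # relevant variable, correlated with x1
y = 1.0 + 2.0 * x1 + 3.0 * x2 + rng.normal(size=n)

const = np.ones(n)
b_long = ols(y, np.column_stack([const, x1, x2]))   # complete specification
b_short = ols(y, np.column_stack([const, x1]))      # x2 omitted (underfitting)
delta = ols(x2, np.column_stack([const, x1]))       # auxiliary regression of x2 on x1

# Short slope = long slope + (effect of x2) * (slope of x2 on x1)
bias = b_long[2] * delta[1]
print(b_long[1], b_short[1], bias)
```

Here the short regression attributes part of the effect of $x_2$ to $x_1$, so its slope lands near $2 + 3 \times 0.8$ rather than the true 2.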

Overfitting: high risk of inflated standard errors (multicollinearity)
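Overfitting with an irrelevant but collinear regressor, sketched in Python/NumPy with hypothetical data. The extra variable has no effect on $y$, yet the standard error of the $x_1$ coefficient rises because $r^2_{X_1}$ in the standard-error formula moves towards 1.

```python
import numpy as np

def ols_se(y, X):
    """OLS coefficients and their conventional standard errors."""
    n, p = X.shape
    beta = np.linalg.lstsq(X, y, rcond=None)[0]
    resid = y - X @ beta
    sigma2 = resid @ resid / (n - p)
    se = np.sqrt(np.diag(sigma2 * np.linalg.inv(X.T @ X)))
    return beta, se

rng = np.random.default_rng(5)
n = 200
x1 = rng.normal(size=n)
x_irrelevant = x1 + rng.normal(scale=0.2, size=n)   # nearly collinear, no true effect on y
y = 1.0 + 2.0 * x1 + rng.normal(size=n)

const = np.ones(n)
_, se_correct = ols_se(y, np.column_stack([const, x1]))
_, se_overfit = ols_se(y, np.column_stack([const, x1, x_irrelevant]))
print(se_correct[1], se_overfit[1])
```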

Correct functional form: linear in its parameters

Polynomials => detect and compute minima and/or maxima
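For a polynomial, the turning point follows from setting the marginal effect to zero: with $y = \beta_0 + \beta_1 x + \beta_2 x^2$, $\partial y / \partial x = \beta_1 + 2\beta_2 x = 0$ gives $x^* = -\beta_1 / (2\beta_2)$, a maximum if $\beta_2 < 0$ and a minimum if $\beta_2 > 0$. A sketch in Python/NumPy on noise-free hypothetical data:

```python
import numpy as np

x = np.linspace(0, 10, 21)
y = 3.0 + 4.0 * x - 0.5 * x ** 2            # hypothetical inverted-U relation, no noise

X = np.column_stack([np.ones_like(x), x, x ** 2])   # still linear in the parameters
b0, b1, b2 = np.linalg.lstsq(X, y, rcond=None)[0]

# dy/dx = b1 + 2*b2*x = 0  =>  x* = -b1 / (2*b2)
x_star = -b1 / (2 * b2)
kind = "maximum" if b2 < 0 else "minimum"
print(x_star, kind)  # 4.0 (up to rounding), maximum
```

Note that the squared term keeps the model linear in its parameters, so OLS applies unchanged.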

Logarithmic effects => interpretation in percentages
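A sketch of the percentage interpretations on hypothetical noise-free data (Python/NumPy assumed). In a log-log model the slope is an elasticity (a 1% change in $x$ gives roughly a $\beta\%$ change in $y$); in a log-lin model $100\beta$ approximates the percentage change in $y$ per unit of $x$, and $100(e^{\beta} - 1)$ gives the exact figure.

```python
import numpy as np

x = np.linspace(1, 50, 50)

# Log-log model: ln y = ln 2 + 0.3 ln x, so the slope is an elasticity
y_loglog = 2.0 * x ** 0.3
A = np.column_stack([np.ones_like(x), np.log(x)])
elasticity = np.linalg.lstsq(A, np.log(y_loglog), rcond=None)[0][1]
pct_change_y = 100 * (1.01 ** elasticity - 1)   # % change in y for a 1% rise in x

# Log-lin model: ln y = 1 + 0.05 x
y_loglin = np.exp(1.0 + 0.05 * x)
B = np.column_stack([np.ones_like(x), x])
b = np.linalg.lstsq(B, np.log(y_loglin), rcond=None)[0][1]
approx_pct = 100 * b                # approximate % change per unit of x
exact_pct = 100 * (np.exp(b) - 1)   # exact % change per unit of x
print(elasticity, pct_change_y, approx_pct, exact_pct)
```

The gap between `approx_pct` (5) and `exact_pct` (about 5.13) grows with the size of the coefficient.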

Detection of a wrong functional form: visuals (avplots) and statistics (Ramsey RESET test)
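A sketch of the Ramsey RESET idea in Python/NumPy (the course runs it in Stata; the helper names here are hypothetical): re-estimate the model with powers of the fitted values added and F-test those extra terms jointly. Using powers 2 to 4 is an assumption, a common choice rather than a fixed rule. A large F suggests the functional form misses a nonlinearity.

```python
import numpy as np

def fit(y, X):
    """OLS fit: return the SSR and the fitted values."""
    beta = np.linalg.lstsq(X, y, rcond=None)[0]
    yhat = X @ beta
    resid = y - yhat
    return resid @ resid, yhat

def reset_f(y, X, max_power=4):
    """RESET F-statistic: jointly test yhat^2 ... yhat^max_power added to X."""
    n, p = X.shape
    ssr_r, yhat = fit(y, X)
    extra = np.column_stack([yhat ** j for j in range(2, max_power + 1)])
    ssr_u, _ = fit(y, np.column_stack([X, extra]))
    q = extra.shape[1]
    return ((ssr_r - ssr_u) / q) / (ssr_u / (n - p - q))

# Hypothetical data with a curved relation
rng = np.random.default_rng(6)
n = 200
x = rng.uniform(0, 5, size=n)
y = 1.0 + x + 0.8 * x ** 2 + rng.normal(size=n)

const = np.ones(n)
f_linear = reset_f(y, np.column_stack([const, x]))             # misses the curvature
f_quadratic = reset_f(y, np.column_stack([const, x, x ** 2]))  # correct functional form
print(f_linear, f_quadratic)
```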

Effect interpretation, 'marginal effects': $\beta_j = \dfrac{\partial y}{\partial x_j}$

• Raw variables => unit changes

• Standardized variables => changes in standard deviations from the mean

• Log-transformed variables => percentage changes
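The standardized interpretation can be checked with a short sketch (Python/NumPy, hypothetical data): the slope from regressing standardized $y$ on standardized $x$ equals the raw slope rescaled by $s_x / s_y$.

```python
import numpy as np

def slope(y, x):
    """OLS slope of y on x (with a constant)."""
    X = np.column_stack([np.ones_like(x), x])
    return np.linalg.lstsq(X, y, rcond=None)[0][1]

def standardize(v):
    """Deviation from the mean in standard-deviation units."""
    return (v - v.mean()) / v.std()

rng = np.random.default_rng(7)
n = 300
x = rng.normal(loc=50, scale=10, size=n)
y = 5.0 + 0.4 * x + rng.normal(scale=3, size=n)

b_raw = slope(y, x)                             # change in y per one-unit change in x
b_std = slope(standardize(y), standardize(x))   # SDs of y per one-SD change in x
b_std_check = b_raw * x.std() / y.std()         # same number via rescaling
print(b_raw, b_std, b_std_check)
```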

Before any of this applies: !!! First know your data !!!

Good results depend on good data

Ex ante:

Find mistakes and extreme values

Ex post:

Check for outliers and/or influential values

Visuals: rvfplot, avplot(s)

Standardized DfBeta(s): |SDfBeta| > 1

Studentized residuals: |studentized residual| > 3

Remedies:

Correct errors

Delete the observation

Add an extreme-case dummy
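The two influence statistics can be computed directly from the OLS fit. The sketch below (Python/NumPy, hypothetical data with one planted data error) uses the externally studentized residual and the DFBETAS formula $(\hat{\beta}_j - \hat{\beta}_{j(i)}) / (s_{(i)}\sqrt{[(X'X)^{-1}]_{jj}})$, where $(i)$ means "without observation $i$". That these match the values Stata reports (e.g. via `predict ..., rstudent` and `dfbeta`) is an assumption worth verifying; the cut-offs are the ones from these notes.

```python
import numpy as np

def influence(y, X):
    """Return OLS coefficients, externally studentized residuals and DFBETAS."""
    n, p = X.shape
    XtX_inv = np.linalg.inv(X.T @ X)
    beta = XtX_inv @ X.T @ y
    e = y - X @ beta
    h = np.einsum("ij,jk,ik->i", X, XtX_inv, X)           # leverage h_ii
    s2_i = (e @ e - e ** 2 / (1 - h)) / (n - p - 1)      # error variance without obs i
    rstudent = e / np.sqrt(s2_i * (1 - h))
    # beta_hat - beta_hat(i) = (X'X)^{-1} x_i e_i / (1 - h_ii), one row per observation
    dbeta = (XtX_inv @ X.T).T * (e / (1 - h))[:, None]
    dfbetas = dbeta / np.sqrt(s2_i[:, None] * np.diag(XtX_inv)[None, :])
    return beta, rstudent, dfbetas

rng = np.random.default_rng(8)
n = 50
x = rng.uniform(0, 10, size=n)
y = 1.0 + 0.5 * x + rng.normal(scale=0.5, size=n)
y[0] += 6.0                                    # planted data error in observation 0
X = np.column_stack([np.ones(n), x])

beta, rstud, sdfbeta = influence(y, X)
flag_resid = np.abs(rstud) > 3                 # |studentized residual| > 3
flag_dfbeta = np.abs(sdfbeta).max(axis=1) > 1  # any |SDfBeta| > 1
print(np.flatnonzero(flag_resid), np.flatnonzero(flag_dfbeta))
```

The closed-form leave-one-out expressions avoid re-running the regression $N$ times; a brute-force deletion of a single observation gives the same DFBETAS.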

Assumption 6

Normality assumption

Not necessary for OLS estimation

Necessary for hypothesis testing => statistical inference

Statistical Inference

From Sample to Population

Null Hypothesis Testing => see procedure: 5 steps

Under the null, how likely are my results?

Null hypothesis implies a restriction

Joint F-test = set several parameters simultaneously equal to 0.
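The restriction view of a joint F-test, sketched in Python/NumPy (helper names hypothetical): estimate the model with and without the restriction and compare sums of squared residuals, $F = \dfrac{(SSR_R - SSR_U)/q}{SSR_U/(N - k - 1)}$ with $q$ restrictions and $k$ slope parameters in the unrestricted model. When the restricted model keeps only the constant, this reduces to the $R^2$ form, which serves as a check.

```python
import numpy as np

def ssr(y, X):
    """Sum of squared OLS residuals."""
    beta = np.linalg.lstsq(X, y, rcond=None)[0]
    resid = y - X @ beta
    return resid @ resid

def joint_f(y, X_u, X_r):
    """F-statistic for the restrictions that turn model X_u into model X_r."""
    n, p_u = X_u.shape                 # p_u = k + 1 parameters
    q = p_u - X_r.shape[1]             # number of restrictions
    ssr_u, ssr_r = ssr(y, X_u), ssr(y, X_r)
    return ((ssr_r - ssr_u) / q) / (ssr_u / (n - p_u))

rng = np.random.default_rng(9)
n = 120
x1, x2 = rng.normal(size=(2, n))
y = 1.0 + 0.3 * x1 + 0.2 * x2 + rng.normal(size=n)

const = np.ones(n)
X_u = np.column_stack([const, x1, x2])
X_r = const[:, None]                   # H0: beta_1 = beta_2 = 0 (constant only)
f_stat = joint_f(y, X_u, X_r)
print(f_stat)
```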

Chow test = are there structural breaks? Structural changes between 2 groups/time points? What is their impact?
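The Chow test is a joint F-test in which the restricted model forces one set of coefficients on both groups. A sketch in Python/NumPy with a hypothetical break halfway through the sample: the unrestricted SSR is the sum of the two separate regressions, which equals the SSR of a model fully interacted with a group dummy.

```python
import numpy as np

def ssr(y, X):
    """Sum of squared OLS residuals."""
    beta = np.linalg.lstsq(X, y, rcond=None)[0]
    resid = y - X @ beta
    return resid @ resid

def chow_f(y, X, group):
    """Chow F-statistic: do the coefficients differ between group and ~group?"""
    n, p = X.shape                     # p = k + 1 parameters per group
    ssr_pooled = ssr(y, X)             # restricted: same coefficients for everyone
    ssr_split = ssr(y[group], X[group]) + ssr(y[~group], X[~group])
    return ((ssr_pooled - ssr_split) / p) / (ssr_split / (n - 2 * p))

rng = np.random.default_rng(10)
n = 200
x = rng.uniform(0, 10, size=n)
after = np.arange(n) >= 100            # hypothetical break point: second half of the sample
y = np.where(after, 1.0 + 1.5 * x, 1.0 + 0.5 * x) + rng.normal(size=n)
X = np.column_stack([np.ones(n), x])
f_chow = chow_f(y, X, after)

# Same unrestricted model written with a group dummy and interactions
d = after.astype(float)
X_interacted = np.column_stack([X, X * d[:, None]])
print(f_chow)
```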

Assumption 5

Multicollinearity
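Multicollinearity is usually quantified with the variance inflation factor $VIF_j = 1/(1 - r^2_{X_j})$, the factor that appears in the standard-error formula for $\hat{\beta}_j$. A sketch in Python/NumPy with hypothetical regressors; the helper `vif` is illustrative (in Stata, `estat vif` after `regress` reports the same quantity).

```python
import numpy as np

def vif(X):
    """Variance inflation factors for the columns of X (regressors only, no constant)."""
    n, k = X.shape
    out = np.empty(k)
    for j in range(k):
        # Auxiliary regression of column j on a constant and the other columns
        others = np.column_stack([np.ones(n), np.delete(X, j, axis=1)])
        coef = np.linalg.lstsq(others, X[:, j], rcond=None)[0]
        resid = X[:, j] - others @ coef
        r2 = 1 - resid @ resid / np.sum((X[:, j] - X[:, j].mean()) ** 2)
        out[j] = 1 / (1 - r2)
    return out

rng = np.random.default_rng(11)
n = 500
x1 = rng.normal(size=n)
x2 = x1 + rng.normal(scale=0.3, size=n)    # strongly collinear with x1
x3 = rng.normal(size=n)                    # unrelated regressor
X = np.column_stack([x1, x2, x3])
vifs = vif(X)
print(vifs)
```

An equivalent shortcut is the diagonal of the inverse correlation matrix of the regressors.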
